In this project, we envisage video-sharing services for live user video streams that are indexed automatically, and in real time, by shared physical content. For example, all streams focusing on a particular player in a football stadium are grouped together.

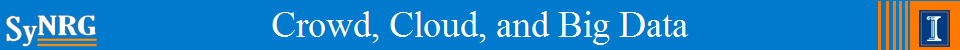

If You See It, Swipe towards It: Crowdsourced Event Localization

This project aims to leverage crowdsourcing to locate events that are difficult to monitor with surveillance cameras because of coverage and cost constraints. The event under consideration is smoking.

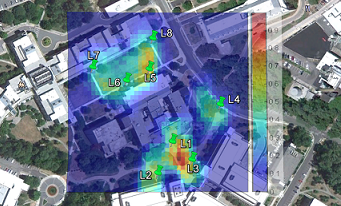

Helping Mobile Apps Bootstrap with Fewer Users

An increasing number of mobile apps require smartphone sensor inputs to infer user behavior, activity, or context. Obtaining labeled training data for these applications is a "chicken-and-egg" problem. This paper proposes a cloud-based architecture to solve this problem.

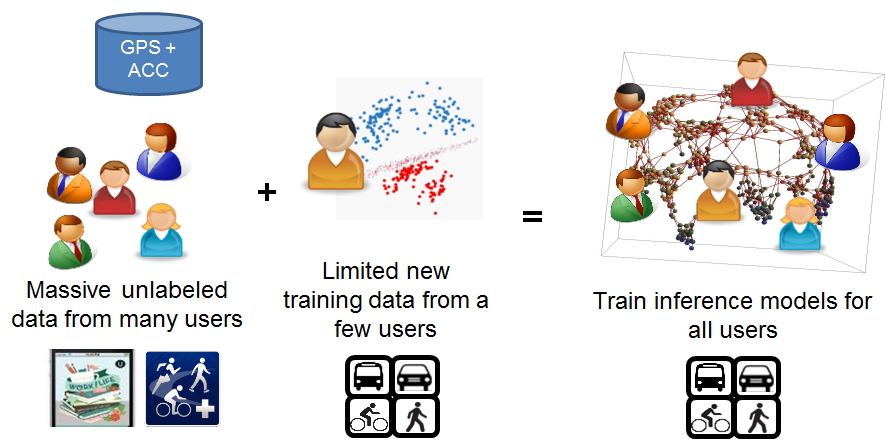

MoVi: Mobile Phone based Video Highlights via Collaborative Sensing

This project envisions a social application in which smartphones collaboratively sense their ambience and recognize socially interesting events. The phone with a good view of the event triggers a video recording, and the video clips from different phones are later stitched together to form highlights.

Micro-Blog: Sharing and Querying Content through Mobile Phones and Social Participation

This project identifies challenges and presents the architecture of a system, Micro-Blog, that uses multi-dimensional sensory inputs to enable a high-resolution, people-centric view of our surroundings.

People

Romit Roy Choudhury

Puneet Jain