Millimeter Wave Networks

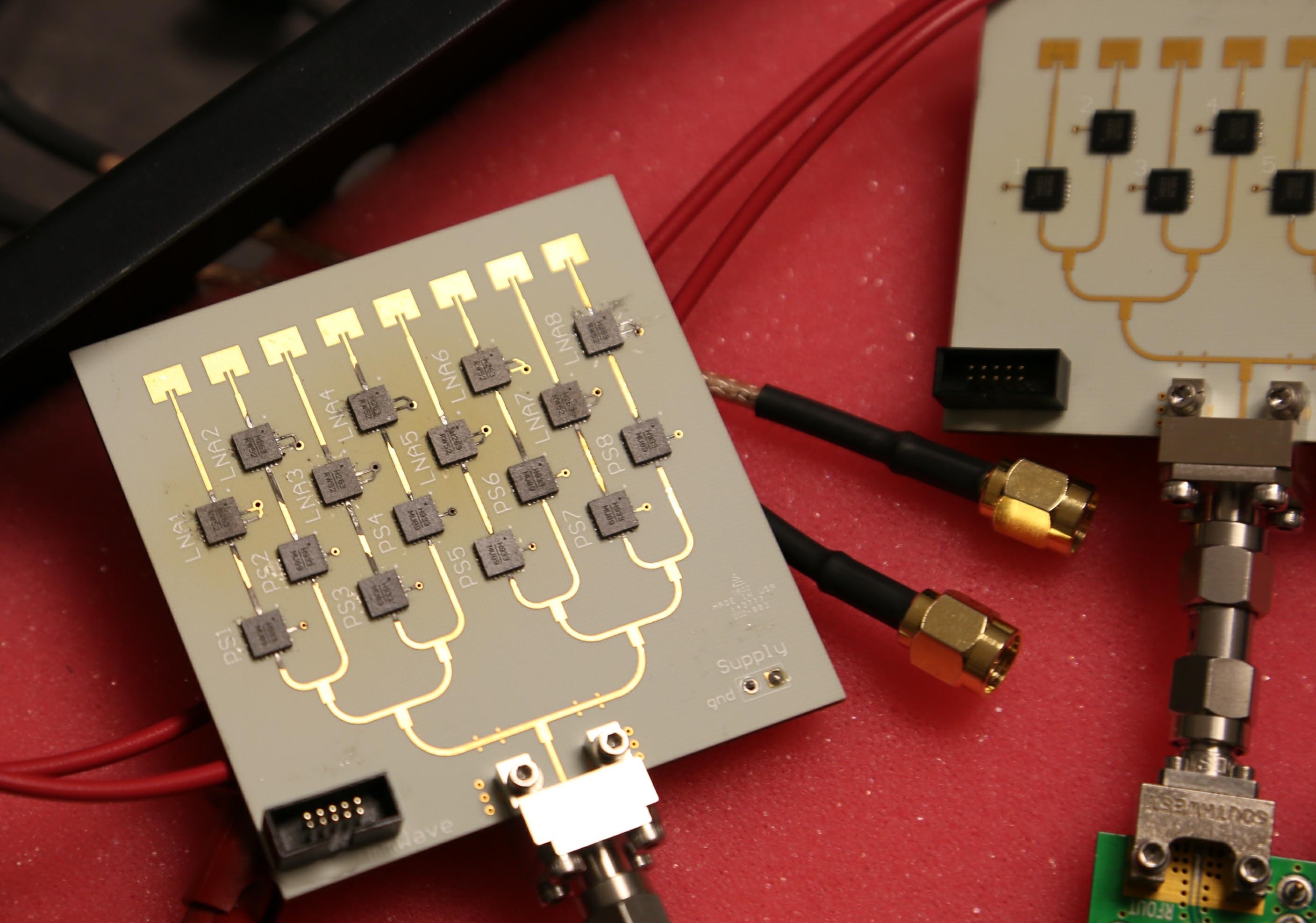

Millimeter wave (mmWave) technologies promise to revolutionize wireless networks. However, they have to use highly directional antennas to focus their power on the receiver. We have designed the first mmWave beam steering system that finds the correct beam alignment without scanning the space. We also built MiRa, a full-fledged mmWave radio with phased arrays capable of beam steering.

Physical Vibration

We envision physical vibration as a new modality of data communication. As an example, we have enabled touch-based vibratory communication between vibration motors and microphones/accelerometers in today's smartphones. We design a vibratory radio at the PHY and MAC layers, and explore possible applications in authentication, device-to-device streaming, and tabletop communication.

Drones and Robots

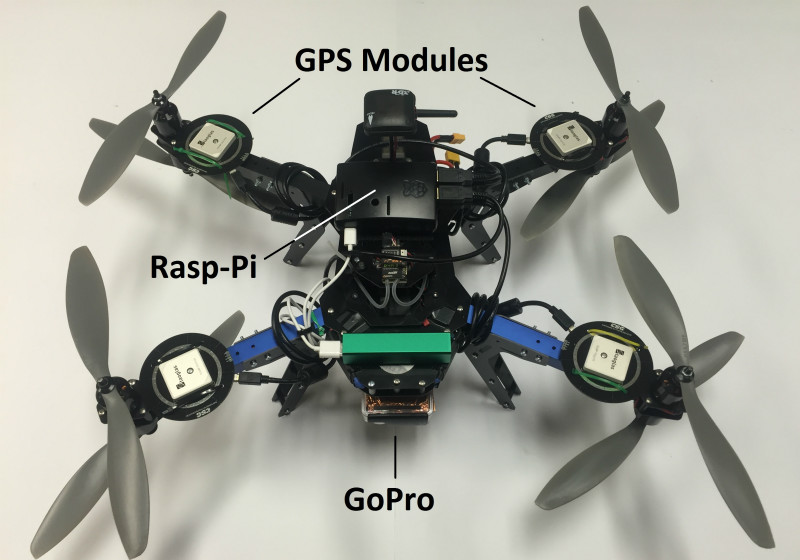

There is growing agreement that wireless capacity (at the PHY and MAC layers) is reaching saturation. Many believe that the next “jump” in network capacity will emerge from new ways of organizing networks. This project considers the possibility of physically moving wireless network infrastructure to improve/optimize desired performance metrics. For example, we envision WiFi access points on wheels that move within a small region to exploit the multipath nature of wireless signals; we also envision drones flying into high demand areas, hovering at strategic locations, and serving as cellular proxies to ground clients. This project is a foray into the landscape of such "robotic wireless networks".

The Sparse Fourier Transform: Theory

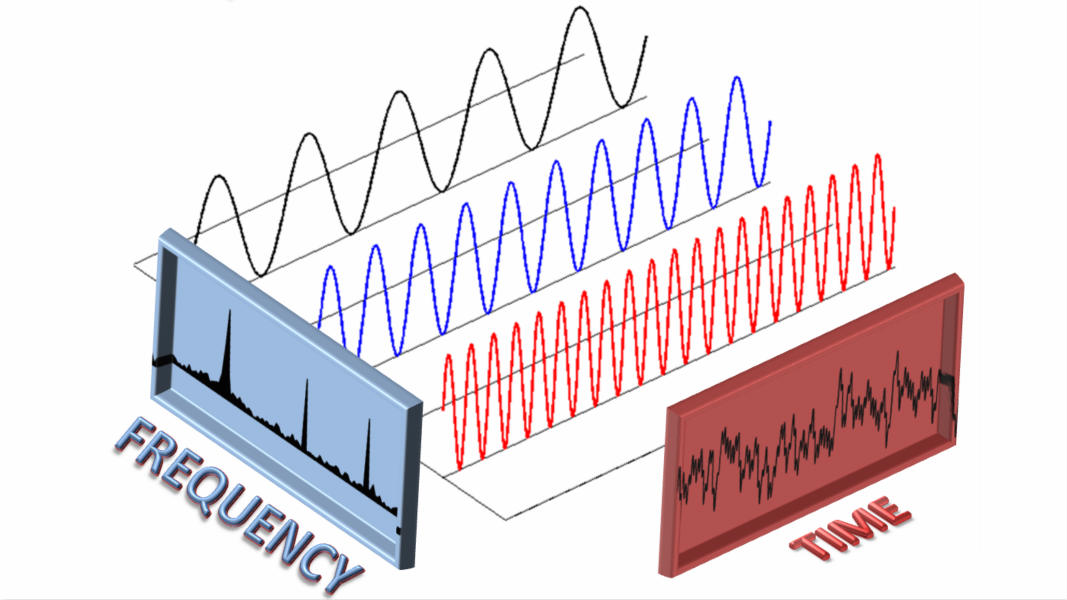

For signals of length n that are (approximately) k-sparse in the frequency domain, i.e., can be approximated by k non-zero frequency coefficients, the sparse Fourier transform computes the Fourier transform in sublinear time, faster than the FFT and using fewer input samples.
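To make the "fewer input samples" idea concrete, here is a minimal sketch. It is not the algorithm developed in this project. It assumes an exactly k-sparse, noise-free signal whose non-zero frequencies land in distinct buckets modulo `num_buckets`; the function name `sparse_fft_no_collisions` and all parameters are hypothetical. Subsampling in time aliases the n frequencies into a small number of buckets, and a second subsampling shifted by one sample reveals each frequency's position through a phase rotation.

```python
import numpy as np

def sparse_fft_no_collisions(x, num_buckets, threshold=1e-8):
    """Recover non-zero DFT coefficients from 2 * num_buckets samples.

    Illustrative assumptions: no noise, and no two non-zero
    frequencies share the same value modulo num_buckets.
    """
    n = len(x)
    if n % num_buckets != 0:
        raise ValueError("num_buckets must divide the signal length")
    stride = n // num_buckets

    # Subsampling in time folds frequency f into bucket f mod num_buckets.
    base_idx = np.arange(num_buckets) * stride
    y = np.fft.fft(x[base_idx])
    # The same subsampling, shifted by one sample in time.
    z = np.fft.fft(x[(base_idx + 1) % n])

    found = {}
    for b in range(num_buckets):
        if abs(y[b]) <= threshold:
            continue  # empty bucket
        # A one-sample shift multiplies the coefficient by exp(2j*pi*f/n),
        # so the phase of z[b] / y[b] encodes the frequency index f.
        phase = np.angle(z[b] / y[b])
        f = int(round(phase * n / (2 * np.pi))) % n
        found[f] = y[b] * stride  # undo the scaling caused by subsampling
    return found

# Example: a length-1024 signal with three non-zero frequencies.
n = 1024
X = np.zeros(n, dtype=complex)
X[5], X[100], X[700] = 3 + 1j, -2, 0.5j
x = np.fft.ifft(X)
recovered = sparse_fft_no_collisions(x, num_buckets=32)
```

In this example the routine reads only 64 of the 1024 time samples. Handling bucket collisions, noise, and approximately sparse spectra requires additional machinery that this sketch does not attempt.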

The Sparse Fourier Transform: Applications

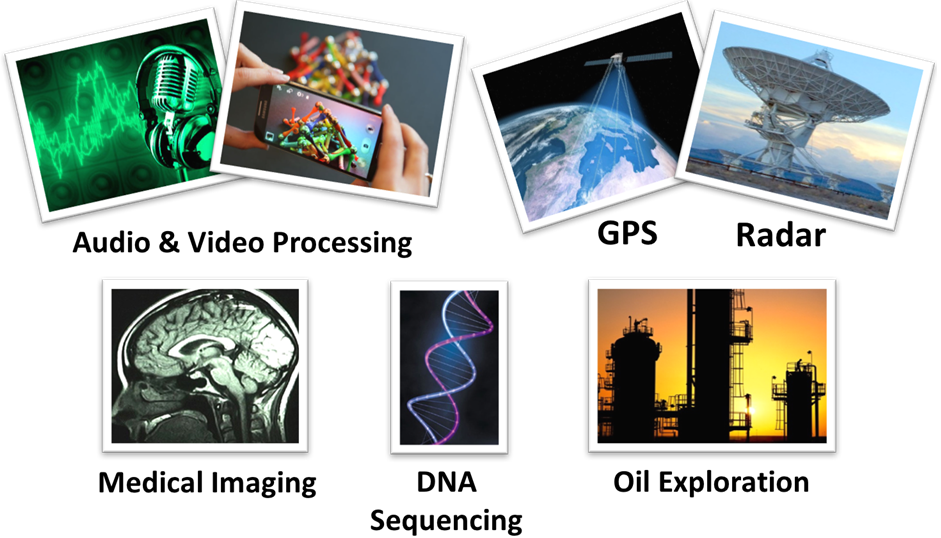

We have demonstrated the impact of the sparse Fourier transform (SFFT) on several practical applications, including GHz-wide spectrum acquisition, GPS, light-field photography, magnetic resonance imaging (MRI), biomolecular nuclear magnetic resonance (NMR), and radio astronomy. We have also built a chip that delivers the largest Fourier transform to date for sparse data.

Augmented Reality

The idea of augmented reality - the ability to look at a physical object through a camera and view annotations about the object - is certainly not new. Yet this apparently feasible vision has not materialized into a precise, fast, and comprehensively usable system. We aim to build a ready-to-use mobile AR system, and we adopt a top-down approach cutting across smartphone sensing, computer vision, cloud offloading, and linear optimization. For instance, we have designed selective cloud-offloaded computer vision under the constraints of today's smartphones to improve the mobile augmented reality experience.

Acoustics and Voice

We have shown that by utilizing hardware nonlinearities, inaudible signals (at ultrasound frequencies) can be designed to be audible to microphones. This can empower an adversary to stand on the road and silently control Amazon Echo and Google Home-like devices in people’s homes. We further extend the range of this attack to as far as 25 feet, limited by the power of our amplifier. We also develop a defense against this class of voice attacks that exploit nonlinearity; the defense requires only software changes at the microphone.
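The underlying effect can be illustrated with a small numerical sketch. It rests on assumptions that are not taken from the project itself: the microphone is modeled as a memoryless quadratic nonlinearity with hypothetical coefficients `a1` and `a2`, and the transmitted signal is simply two ultrasonic tones. Squaring the sum of two tones produces a component at their difference frequency, which falls in the audible band even though neither transmitted tone does.

```python
import numpy as np

fs = 192_000                      # sample rate in Hz (assumed)
num_samples = fs // 10            # 0.1 s of signal
t = np.arange(num_samples) / fs

f1, f2 = 40_000, 41_000           # two inaudible ultrasonic tones (assumed)
s = np.cos(2 * np.pi * f1 * t) + np.cos(2 * np.pi * f2 * t)

# Hypothetical microphone model: linear gain plus a small quadratic term.
a1, a2 = 1.0, 0.1
mic = a1 * s + a2 * s**2

def tone_amplitude(signal, freq):
    """Amplitude of the sinusoidal component of `signal` at `freq` Hz."""
    spectrum = np.fft.rfft(signal) / len(signal)
    k = int(round(freq * len(signal) / fs))
    return 2 * abs(spectrum[k])

# Energy at the 1 kHz difference frequency before and after the microphone.
audible_in_air = tone_amplitude(s, f2 - f1)
audible_at_mic = tone_amplitude(mic, f2 - f1)
```

Under this model `audible_in_air` is essentially zero, while `audible_at_mic` is close to `a2`: the 1 kHz tone exists only after the nonlinear hardware. A real attack would need to shape a voice command rather than a single tone, which this sketch does not cover.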

Motion Tracking in Sports

We have recently started to explore the possibility of bringing IoT to sports analytics, a thriving industry in which motion patterns of balls and players are analyzed for coaching and predictions. We intend to decompose and track the motion of the ball, racquet, arm, and body with inexpensive IoT sensors and radios. The core problems pertain to statistical decomposition, motion tracking, signal processing, and sensor fusion. We have developed a first system to track the 3D trajectory and spin parameters of a cricket ball, with IMU sensors and UWB radios embedded in the ball.

Gesture Recognition

We have been pushing the frontier of activity and gesture recognition. For instance, we have been tracking arm and finger movements through smartwatch sensors, with applications to sports coaching, healthcare, and natural user interfaces (3D mouse). The core problems are at the boundaries of embedded systems, statistical inference, and signal processing. Our results thus far are promising (see ArmTrak video), but we still have many exciting problems to solve.

Localization

We aim to develop different technologies to bring indoor localization into reality. These technologies are built upon motion signal processing, sensor fusion, human motion analysis and wireless sensing. For instance, we have proposed an unsupervised indoor localization scheme that bypasses the need for war-driving. We have also designed various techniques to improve localization accuracy, including reliable walking direction detection with smartphones, more precise localization using PHY layer information or user spinning, and Wi-Fi labeling with picture matching. We also applied localization to object positioning.

RFID Networks

We have been developing solutions to make RFID networks more secure and reliable. For instance, we propose to prevent eavesdropping on RFID data by randomizing the signal and the wireless channel. We also design systems that use an external wearable device equipped with a full-duplex radio to secure transmissions to and from a medical implant. We improve the reliability and efficiency of low-power backscatter networks by modeling uplink transmissions as if performed by a single virtual sender and treating collisions as a sparse rateless code across the nodes.

Side Channel Attacks

We reveal various kinds of side channel attacks on today's mobile and wearable devices. For example, we demonstrate that sensor data from smartwatches can leak information about what the user is typing on a regular (laptop or desktop) keyboard. We find that the vibration motor in mobile devices can be used to eavesdrop. We reveal that each smartphone accelerometer responds differently to the same motion stimulus and can serve as a fingerprint of the smartphone. We also show that the location of screen taps on modern smartphones and tablets can be identified from accelerometer and gyroscope readings.